Introduction

Bagua is a distributed training utility developed by AI Platform@Kuaishou Technology and DS3 Lab@ETH. By adding a few lines of code, users can extend single-GPU training to multiple GPUs, possibly across multiple machines, with strong speedup. Bagua also provides a flexible system abstraction that supports state-of-the-art system relaxation techniques for distributed training. This design makes it straightforward to implement and extend distributed learning algorithms, so researchers can develop new distributed training algorithms on top of Bagua without sacrificing system performance.

So far, Bagua has integrated the following communication primitives:

- Centralized Synchronous Communication (AllReduce)

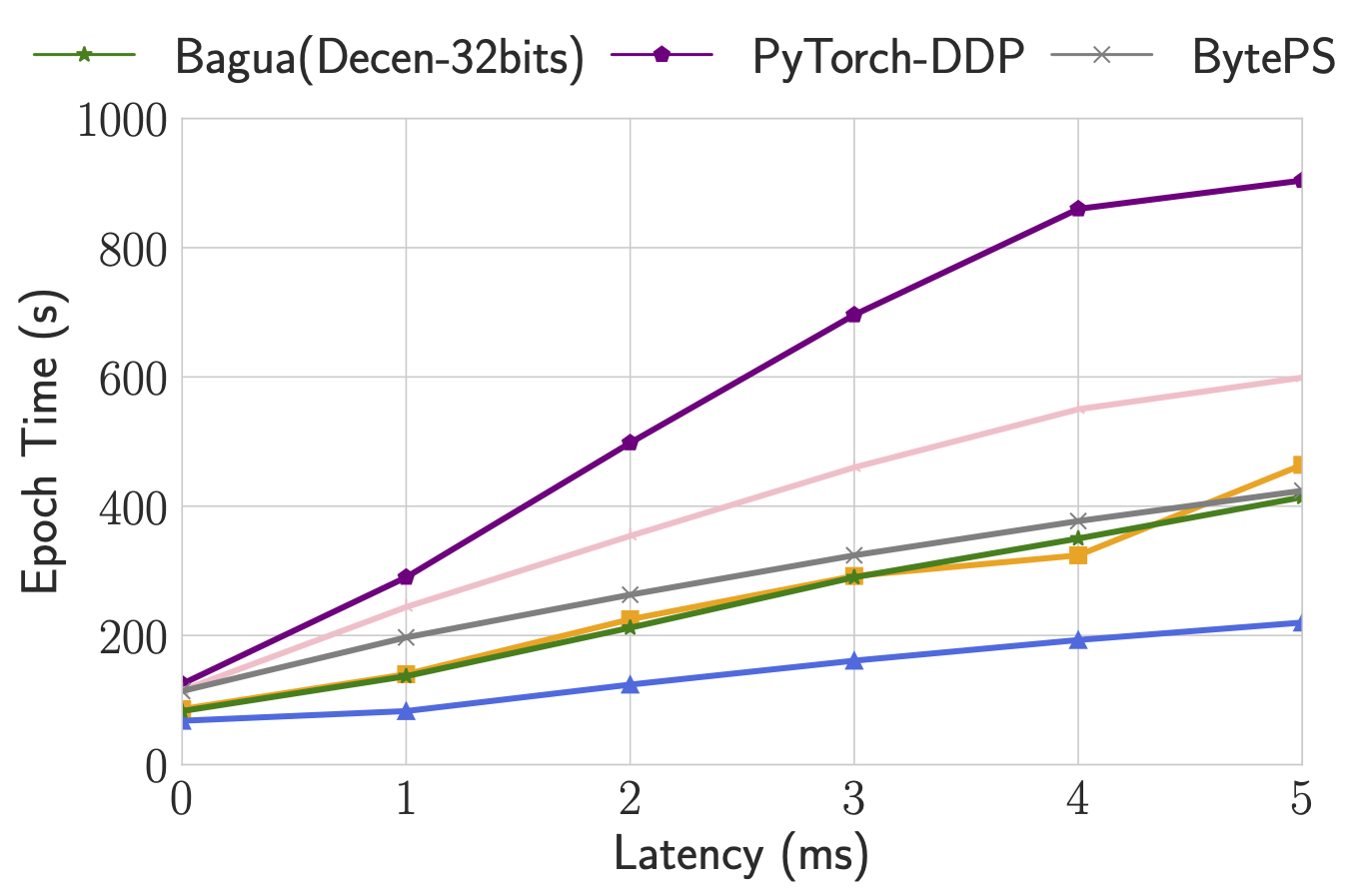

- Decentralized Synchronous Communication

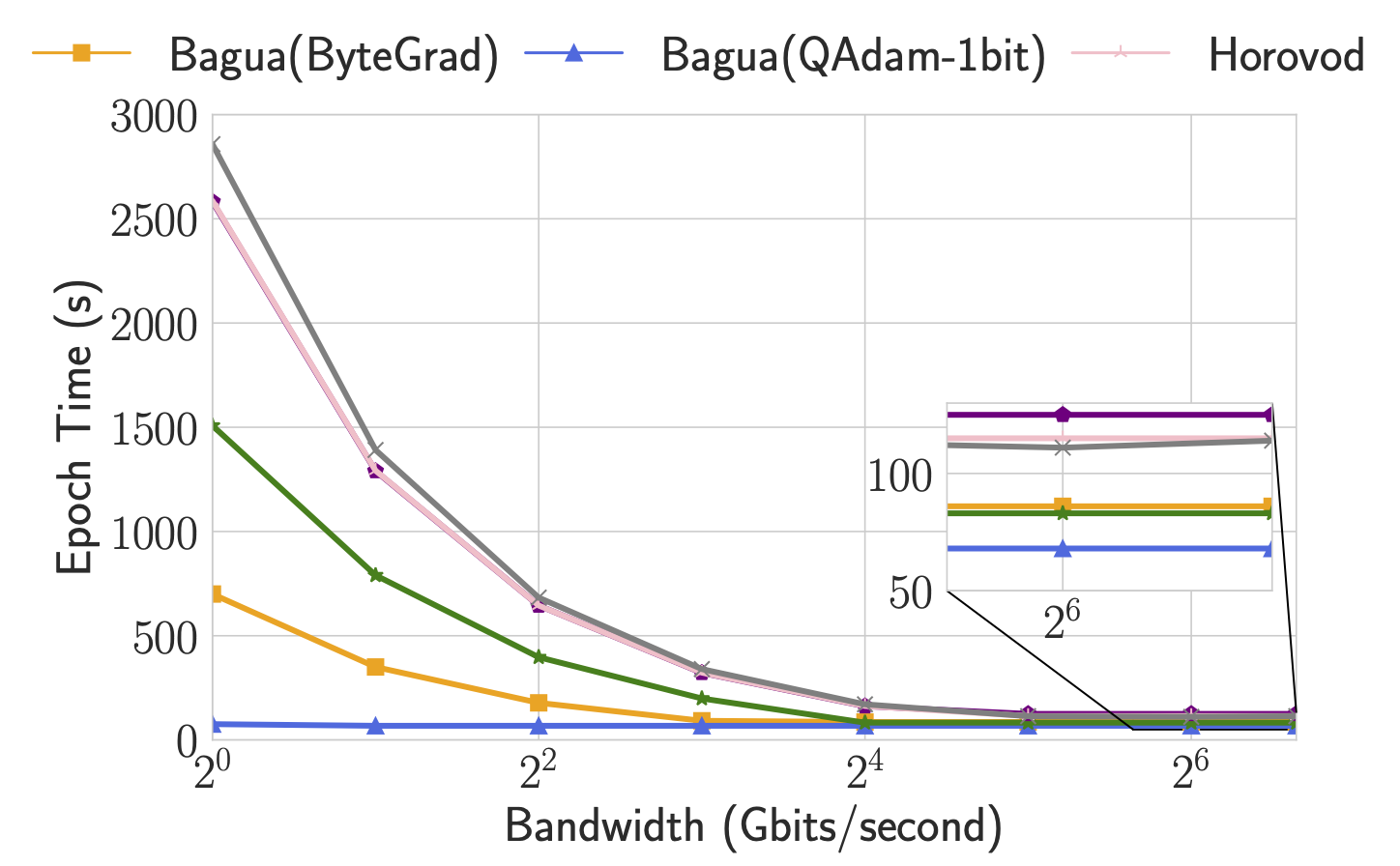

- Low Precision Communication

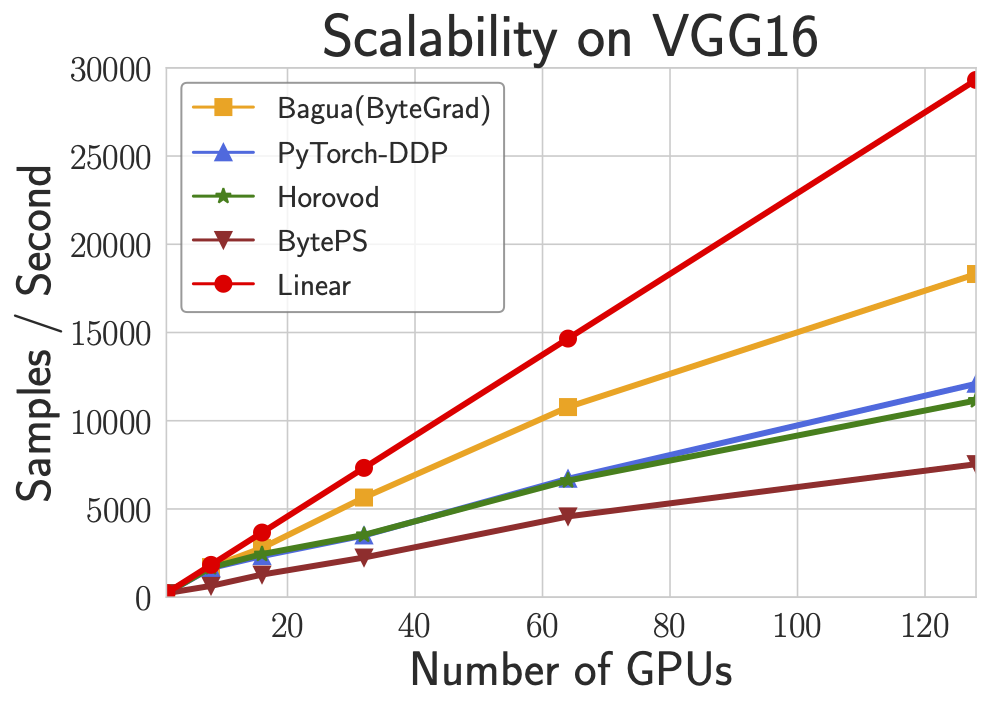
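To make the three primitives concrete, here is a minimal single-process sketch in plain Python that simulates what each one does to a set of per-worker gradient vectors. It does not use Bagua or any real communication backend. The function names (`allreduce_average`, `ring_average`, `quantize`), the ring topology chosen for the decentralized case, and the uniform 8-bit min-max quantizer are all assumptions made for illustration and may differ from what Bagua actually implements.

```python
from typing import List

Vector = List[float]


def allreduce_average(workers: List[Vector]) -> List[Vector]:
    """Centralized synchronous: every worker ends up with the global mean."""
    n = len(workers)
    mean = [sum(column) / n for column in zip(*workers)]
    return [list(mean) for _ in workers]


def ring_average(workers: List[Vector]) -> List[Vector]:
    """Decentralized synchronous (assumed ring topology): each worker
    averages its vector with those of its left and right neighbors only."""
    n = len(workers)
    result = []
    for i in range(n):
        left = workers[(i - 1) % n]
        right = workers[(i + 1) % n]
        result.append(
            [(a + b + c) / 3 for a, b, c in zip(left, workers[i], right)]
        )
    return result


def quantize(vec: Vector, levels: int = 255) -> Vector:
    """Low precision (assumed uniform min-max scheme): snap each value to
    one of levels + 1 evenly spaced points, then map back to floats."""
    lo, hi = min(vec), max(vec)
    if hi == lo:
        return list(vec)  # constant vector: nothing to quantize
    scale = (hi - lo) / levels
    return [lo + round((x - lo) / scale) * scale for x in vec]


if __name__ == "__main__":
    grads = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
    print("allreduce:", allreduce_average(grads))
    print("ring:     ", ring_average(grads))
    print("quantized:", quantize([0.1, 0.25, 0.9], levels=15))
```

In this sketch, the centralized variant makes all workers identical after a single step. The ring variant only mixes neighboring workers, so they converge toward the same value over repeated steps while the global mean is preserved. The quantizer trades a bounded rounding error for fewer bits per value on the wire.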

Bagua's effectiveness has been validated across a range of scenarios and models, including VGG and ResNet on ImageNet, BERT Large, and several large-scale industrial applications at Kuaishou, such as

- a recommendation system supporting model training with dozens of TB of parameters,

- video/image understanding with more than 1 billion images/videos,

- ASR with TB-level datasets,

- and others.

Performance

For more comprehensive and up-to-date results, refer to the Bagua benchmark page.

Cite Bagua

@misc{gan2021bagua,
  title={BAGUA: Scaling up Distributed Learning with System Relaxations},
  author={Shaoduo Gan and Xiangru Lian and Rui Wang and Jianbin Chang and Chengjun Liu and Hongmei Shi and Shengzhuo Zhang and Xianghong Li and Tengxu Sun and Jiawei Jiang and Binhang Yuan and Sen Yang and Ji Liu and Ce Zhang},
  year={2021},
  eprint={2107.01499},
  archivePrefix={arXiv},
  primaryClass={cs.LG}
}